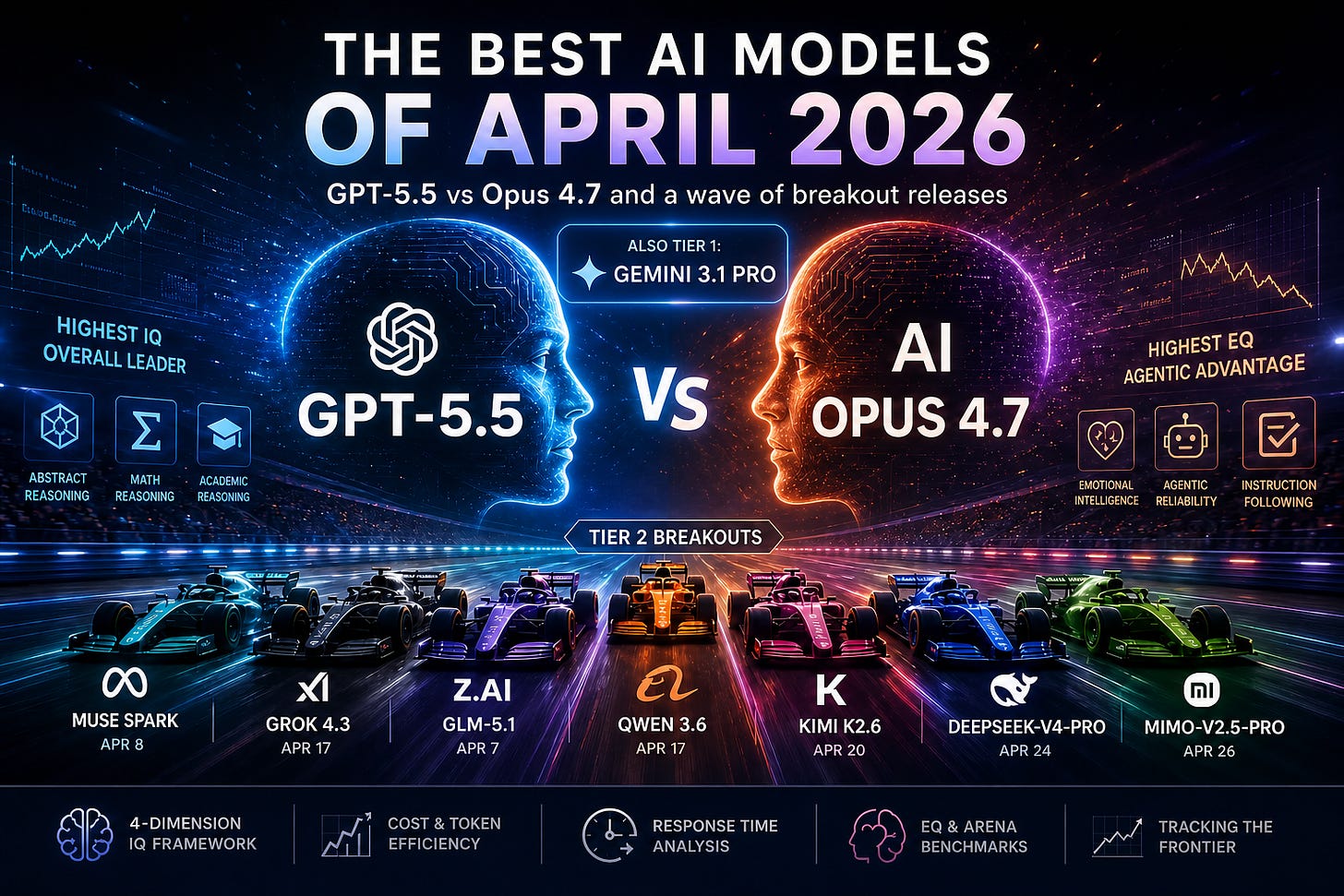

The Best AI Models of April 2026

GPT-5.5 vs Opus 4.7 — and the month the Tier 2 field exploded

April 2026 was the most important month for AI model releases so far this year.

OpenAI released GPT-5.5. Anthropic released Claude Opus 4.7. Meta came back into the race with Muse Spark. xAI shipped Grok 4.3. And the Chinese frontier labs delivered a full wave of serious models: GLM-5.1, Qwen3.6, Kimi K2.6, DeepSeek-V4-Pro, and MiMo-V2.5-Pro.

The simple story is that GPT-5.5 and Opus 4.7 are now the two new co-champions of the frontier.

The more interesting story is that April was not one race. It was three races happening at once.

The first was the raw intelligence race, where GPT-5.5 moved into first place on AI IQ’s overall estimated IQ ranking.

The second was the agentic reliability race, where Opus 4.7 made a strong case that the most useful AI model is not always the one with the highest abstract score, but the one you most trust to carry work through messy, long-running workflows.

The third was the cost-performance race, where Chinese labs continued to compress the gap between “frontier” and “cheap enough to use everywhere.”

The frontier did not commoditize in April. GPT-5.5, Opus 4.7, and Gemini 3.1 Pro are still meaningfully ahead of the rest.

But Tier 2 did commoditize.

That is the real story.

April was the month agentic AI became the default benchmark

A year ago, model launches were still mostly judged by chat quality, coding snippets, MMLU-style knowledge tests, and a handful of math benchmarks.

That is no longer enough.

The models released in April were overwhelmingly marketed around agents: coding agents, research agents, computer-use agents, tool-use agents, long-horizon agents, and multi-agent orchestration.

OpenAI described GPT-5.5 as a model for “real work,” emphasizing coding, online research, data analysis, document and spreadsheet creation, software operation, and multi-tool task completion. OpenAI also reported GPT-5.5 at 82.7% on Terminal-Bench 2.0, 84.9% on GDPval, 51.7% on FrontierMath Tier 1–3, and 35.4% on FrontierMath Tier 4.

Anthropic’s Opus 4.7 launch told a similar story from a different angle: complex coding work, long-running tasks, instruction-following, vision improvements, and stronger self-verification. Anthropic made Opus 4.7 generally available on April 16, 2026, at the same API price as Opus 4.6: $5 per million input tokens and $25 per million output tokens.

The Chinese labs leaned even harder into long-horizon autonomy. Z.ai described GLM-5.1 as a model designed for long-horizon tasks that can work independently for up to eight hours in a single run. Kimi K2.6 is positioned as an open-source multimodal agentic model for long-horizon coding, autonomous execution, coding-driven design, and swarm-based orchestration. DeepSeek-V4-Pro ships with 1M context and a 1.6T-parameter / 49B-active MoE architecture. Xiaomi’s MiMo-V2.5-Pro is also a 1M-context, open-sourced MoE model, built for complex software engineering and long-horizon tasks.

This is why the AI IQ framework matters. The old way to compare models was to ask, “Which model got the highest score on the benchmark?”

The better question now is: which model is best for the kind of cognition you actually need?

AI IQ breaks that into four dimensions: Abstract Reasoning, Mathematical Reasoning, Programmatic Reasoning, and Academic Reasoning. The composite IQ is the mean of those dimensions, with easier or more gameable benchmarks compressed so they cannot dominate the final score.

That compression is important. A model should not become “the smartest model” just because it crushes a saturated or contaminated benchmark. The frontier should be judged by hard, still-discriminating tests.
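To make the averaging and compression idea concrete, here is a minimal sketch. It assumes each dimension is a plain mean of its benchmark-level scores and that "compression" means shrinking a score's distance from an anchor of 100 by a fixed factor. Those details, along with the function names and every number below, are illustrative assumptions, not AI IQ's published method or real model scores.

```python
from statistics import mean

# Benchmarks the article describes as compressed.
COMPRESSED = {"AIME", "SWE-Bench Verified", "GPQA Diamond"}


def compress(score: float, anchor: float = 100.0, factor: float = 0.5) -> float:
    """Assumed scheme: pull a score toward `anchor` by `factor`."""
    return anchor + (score - anchor) * factor


def dimension_score(benchmark_scores: dict[str, float]) -> float:
    """Assumed: a dimension is the mean of its (possibly compressed) benchmarks."""
    adjusted = [
        compress(score) if name in COMPRESSED else score
        for name, score in benchmark_scores.items()
    ]
    return mean(adjusted)


def composite_iq(dimension_scores: dict[str, float]) -> float:
    """Per the article: the composite is the mean of the four dimensions."""
    return mean(list(dimension_scores.values()))


# Placeholder numbers only. A saturated AIME result (160) is pulled back to 130
# before averaging, so it cannot drag the math dimension up on its own.
math_dim = dimension_score({"FrontierMath Tier 4": 120.0, "AIME": 160.0})

composite = composite_iq({
    "abstract": 120.0,
    "mathematical": math_dim,
    "programmatic": 130.0,
    "academic": 125.0,
})
```

Without compression, the placeholder math dimension would average to 140 instead of 125, which is the kind of distortion the compression step is meant to prevent.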

The new Tier 1: GPT-5.5, Opus 4.7, and Gemini 3.1 Pro

AI IQ’s updated ranking now has three Tier 1 models:

GPT-5.5

Claude Opus 4.7

Gemini 3.1 Pro

Google did not release a major new model in April, but Gemini 3.1 Pro remains in the top cluster. That is important. The April narrative is not “OpenAI and Anthropic left everyone behind.” It is more precise than that: OpenAI and Anthropic refreshed into the frontier, while Google’s previous frontier model still held its ground.

The top-level read:

GPT-5.5 is the overall intelligence champion. It sits at the top of the AI IQ ranking and leads the April class on raw composite capability.

Opus 4.7 is the emotional intelligence and agentic trust champion. It does not beat GPT-5.5 on overall IQ, but it leads on EQ and remains one of the most compelling models for long-running professional work.

Gemini 3.1 Pro is the holdover giant. It did not need an April launch to remain relevant. On the AI IQ charts, it continues to sit in the frontier cluster and is especially strong in programmatic reasoning.

The frontier is now less like a single leaderboard and more like a three-model draft board. For any serious workflow, the right answer is usually not “use the highest-ranked model.” It is:

Use GPT-5.5 when you want the strongest general reasoner.

Use Opus 4.7 when you want the best blend of intelligence, EQ, instruction-following, and long-horizon reliability.

Use Gemini 3.1 Pro when you care about Google-stack integration, coding strength, multimodal workflows, or cost-performance tradeoffs inside that ecosystem.

GPT-5.5: the new raw intelligence leader

GPT-5.5 is the most important release of April.

On AI IQ, it takes the top overall spot. It also leads the April releases in Abstract Reasoning, Mathematical Reasoning, and Academic Reasoning. That is the core of its story: GPT-5.5 is not merely a coding model, a chat model, or a tool-use model. It is the broadest new general-purpose intelligence system in the April wave.

OpenAI’s own launch framing matches the AI IQ read. GPT-5.5 is presented as a model that can plan, use tools, check its work, navigate ambiguity, and keep going across multi-part tasks. OpenAI also emphasizes that GPT-5.5 matches GPT-5.4’s per-token latency while performing at a higher level and using fewer tokens on Codex tasks.

But AI IQ adds an important correction: GPT-5.5 is not the obvious cost-performance winner.

The model is expensive. OpenAI lists future API pricing for gpt-5.5 at $5 per million input tokens and $30 per million output tokens, with GPT-5.5 Pro priced far higher at $30 per million input tokens and $180 per million output tokens.

So the practical recommendation is not “use GPT-5.5 for everything.”

It is: use GPT-5.5 when the marginal cost of being wrong is high.

That means difficult research, complex analysis, high-stakes coding tasks, scientific reasoning, mathematical reasoning, architecture decisions, and work where the model’s ability to notice hidden structure matters more than token price.

GPT-5.5 is the model you reach for when you are buying judgment.

Opus 4.7: the model people may actually trust more

If GPT-5.5 is the IQ winner, Opus 4.7 is the “would I hand this work to it?” winner.

On AI IQ’s EQ ranking, Anthropic continues to dominate. Opus 4.7 sits at the top of the EQ chart, ahead of GPT-5.5 and the rest of the April field. That matters more than people think.

EQ is not just “being nice.” In professional AI use, it often shows up as calibration, tone, judgment, refusal discipline, conversational tact, knowing when the user is confused, knowing when to push back, and knowing when a task needs clarification versus execution.

AI IQ estimates EQ from EQ-Bench 3 and Arena Elo, then maps those signals onto the same kind of normalized scale used for IQ. Because EQ-Bench is Claude-judged, Anthropic models receive a 200-point EQ-Bench adjustment, which makes Opus 4.7’s top placement more notable rather than less.

Anthropic’s launch post reads like a long list of enterprise users saying the same thing in different words: Opus 4.7 is better at the parts of work that happen after the first answer. It catches mistakes, follows instructions more literally, handles long-running workflows, uses tools more reliably, and produces stronger professional artifacts.

That is the real distinction between GPT-5.5 and Opus 4.7.

GPT-5.5 looks like the smartest model.

Opus 4.7 often looks like the better coworker.

For teams building agents, that distinction is not cosmetic. The best agent is not always the one with the highest peak score. It is the one that does not quietly drift, loop, overcomplicate, ignore constraints, or hand back a plausible but unverified answer after 40 minutes of tool calls.

Opus 4.7’s strongest case is not that it beats GPT-5.5 everywhere. It does not.

Its strongest case is that the frontier is now close enough that trust, EQ, and workflow stability become first-order selection criteria.

The surprise: Tier 2 is now crowded with genuinely useful models

The April Tier 2 list is where the market changed most.

AI IQ’s updated Tier 2 includes:

Grok 4.3

Kimi K2.6

DeepSeek-V4-Pro

Muse Spark

Qwen3.6

MiMo-V2.5-Pro

GLM-5.1

That is a lot of serious models in one month.

None of these cleanly displaces GPT-5.5, Opus 4.7, or Gemini 3.1 Pro as the best overall model. But that is the wrong bar. The point is that Tier 2 is now good enough to matter for production routing.

A year ago, “use the best model” was often a reasonable default.

In May 2026, that is lazy.

If a task is cheap, repetitive, narrow, or tolerant of a small quality drop, you should probably not be sending it to the most expensive frontier model. The cost-performance charts on AI IQ make this especially clear because they do not just plot sticker price. They use effective cost: token cost scaled by how many tokens a model actually uses. AI IQ anchors token cost to a 2M input / 1M output workload, then adjusts by how many tokens the model burns on the Artificial Analysis evaluation suite.

That framing changes the conversation. Some models look cheap but waste tokens. Others look expensive but are efficient enough that the real gap is smaller. And some models are simply cheap enough that they should be in every serious evaluation harness.
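A rough sketch of the effective-cost idea follows. The 2M input / 1M output anchor and the GPT-5.5 and Opus 4.7 list prices come from this article. The `usage_ratio` parameter (tokens burned relative to some reference model), the exact multiplication, and the two made-up models at the end are assumptions for illustration, not AI IQ's actual formula or measured data.

```python
INPUT_TOKENS_M = 2.0   # anchor workload: 2M input tokens
OUTPUT_TOKENS_M = 1.0  # anchor workload: 1M output tokens


def anchored_cost(input_price: float, output_price: float) -> float:
    """Sticker cost in dollars of the anchor workload (prices are per 1M tokens)."""
    return INPUT_TOKENS_M * input_price + OUTPUT_TOKENS_M * output_price


def effective_cost(input_price: float, output_price: float, usage_ratio: float) -> float:
    """Assumed form: sticker cost scaled by relative token usage.

    usage_ratio is hypothetical: 1.0 means the model burns as many tokens as
    the reference, 3.0 means three times as many.
    """
    return anchored_cost(input_price, output_price) * usage_ratio


# List prices quoted in this article.
gpt_55_sticker = anchored_cost(5.0, 30.0)    # 2*5 + 1*30 = 40.0
opus_47_sticker = anchored_cost(5.0, 25.0)   # 2*5 + 1*25 = 35.0

# Entirely made-up models showing how the ranking can flip.
cheap_but_chatty = effective_cost(1.0, 4.0, usage_ratio=3.0)   # 6 * 3.0 = 18.0
pricey_but_terse = effective_cost(3.0, 9.0, usage_ratio=1.0)   # 15 * 1.0 = 15.0
```

In the made-up pair, the model with the lower sticker price ends up costing more once its token usage is counted, which is the pattern the effective-cost charts are designed to expose.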

The best April examples are Kimi K2.6, DeepSeek-V4-Pro, and MiMo-V2.5-Pro.

Kimi K2.6 is the cleanest open-source story: native multimodal, agentic, long-horizon coding, coding-driven design, and swarm orchestration.

DeepSeek-V4-Pro is the scale-and-efficiency story: 1.6T total parameters, 49B active, 1M context, and a claim of performance rivaling top closed models.

MiMo-V2.5-Pro is the “local/open agentic frontier” story: 1.02T total parameters, 42B active, 1M context, open-sourced weights, and strong long-horizon coding demonstrations.

The frontier labs still own the top.

But the rest of the market now has options.

Dimension-by-dimension winners

The cleanest way to understand April is to stop asking “which model is best?” and ask “best at what?”

Best overall IQ: GPT-5.5

GPT-5.5 is the overall AI IQ leader. It is the model to beat on broad benchmark-derived intelligence.

Its advantage is not confined to one dimension. It is especially strong across abstract, mathematical, and academic reasoning, which makes it the most credible default when the task is hard to classify.

For professionals, this matters because many high-value tasks are mixed-domain. A research memo might require reading dense technical material, checking a mathematical argument, writing code to test an assumption, and then producing a clean executive summary. The more mixed the task, the more valuable broad IQ becomes.

Best EQ: Opus 4.7

Opus 4.7 leads the EQ ranking.

This makes it the best candidate for emotionally sensitive communication, high-context collaboration, writing with judgment, ambiguous stakeholder-facing work, coaching, editing, and situations where the model needs to push back without becoming annoying.

EQ also matters in agents. A low-EQ model can be technically correct but operationally painful: too verbose, too agreeable, too brittle, too literal in the wrong places, or too eager to satisfy a bad instruction.

Opus 4.7’s advantage here is one reason it will likely remain the default for many “AI coworker” workflows even when GPT-5.5 leads on raw IQ.

Best abstract reasoning: GPT-5.5

Abstract reasoning is the closest AI IQ dimension to raw fluid intelligence: the ability to solve novel problems without relying heavily on memorized knowledge. AI IQ uses ARC-AGI-2 and ARC-AGI-1 for this dimension, with ARC-AGI-2 treated as the harder, more frontier-discriminating test.

GPT-5.5 leads the April class here.

That is important because abstract reasoning is one of the hardest capabilities to fake. A model can memorize facts. It can overfit public code tasks. It can get better at common math formats. But novel abstraction is much harder to brute-force through training contamination.

If you want the model most likely to notice the hidden pattern in a new problem, GPT-5.5 is the current pick.

Best mathematical reasoning: GPT-5.5, with GPT-5.3-Codex still looming

Among April’s general-purpose model releases, GPT-5.5 is the math leader.

AI IQ’s math dimension uses FrontierMath Tier 4 and AIME, with AIME compressed because of contamination and saturation concerns. That is the right call. A perfect or near-perfect AIME score no longer tells us as much as it once did.

The interesting wrinkle is that GPT-5.3-Codex remains extremely strong on mathematical reasoning in the AI IQ charts. That suggests OpenAI’s coding-specialist line is not just a coding specialist. It may also be a very strong formal reasoning system.

For most users, GPT-5.5 is the best general math choice. But for code-adjacent math, theorem work, formalization, and technical problem-solving inside a development workflow, GPT-5.3-Codex still deserves attention.

Best programmatic reasoning: Gemini 3.1 Pro overall; GPT-5.5 among April releases

The programmatic reasoning chart is one of the most interesting on AI IQ because it does not simply reward SWE-Bench.

AI IQ’s programmatic dimension combines Terminal-Bench 2.0, SWE-Bench Verified, and SciCode, with SWE-Bench compressed because of leakage and gameability concerns.

That makes the ranking more useful than a simple “who wins SWE-Bench?” scoreboard.

Gemini 3.1 Pro remains one of the strongest models overall on programmatic reasoning. Among the April releases, GPT-5.5 is the strongest broad programmatic reasoner, with Opus 4.7 also highly competitive.

Kimi K2.6 is the one to watch here. It does not win the chart, but its open-source, agentic-coding positioning makes it strategically important. The question is not whether Kimi K2.6 beats GPT-5.5 on every coding benchmark. It does not. The question is whether it is good enough, cheap enough, and controllable enough to become the default model for large volumes of coding-agent work.

That answer may be yes for many teams.

Best academic reasoning: GPT-5.5

GPT-5.5 also leads the April field on academic reasoning.

AI IQ’s academic dimension includes Humanity’s Last Exam, CritPt, and GPQA Diamond, with GPQA compressed due to contamination concerns.

This is where GPT-5.5’s breadth matters most. It is not just answering common questions better. It is performing well across expert-level, hard-to-game, high-breadth benchmarks.

DeepSeek-V4-Pro and Muse Spark are the Tier 2 standouts here. DeepSeek-V4-Pro’s knowledge and reasoning profile makes it one of the strongest Chinese models on academic-style tasks, while Muse Spark gives Meta a surprisingly credible return to high-end reasoning.

Meta’s launch emphasized Muse Spark as a natively multimodal reasoning model with tool use, visual chain of thought, and multi-agent orchestration, and reported strong results from its Contemplating mode on hard tasks like Humanity’s Last Exam and FrontierScience Research.

Muse Spark is not Tier 1 yet. But it is the first Meta model in a while that looks like it belongs in the serious frontier conversation.

The cost-performance story: the smartest model is not always the right model

AI IQ’s cost charts may be more practically important than the IQ chart.

The reason is simple: most real-world AI usage is not one heroic prompt. It is thousands or millions of calls across support workflows, coding agents, research loops, RAG systems, data extraction jobs, document workflows, and internal automations.

At that scale, the question changes from:

“Which model is best?”

to:

“Where does extra intelligence stop paying for itself?”

GPT-5.5 and Opus 4.7 justify their cost when the task is difficult, ambiguous, or high-value. But for many workflows, the April Tier 2 models are now strong enough to route into production.

A sensible model stack in May 2026 looks something like this:

Use GPT-5.5 for the hardest reasoning, research, math, architecture, and high-stakes synthesis.

Use Opus 4.7 for long-horizon agents, collaborative writing, sensitive communication, and workflows where reliability and tone matter.

Use Gemini 3.1 Pro where its programmatic strength, Google ecosystem, or multimodal tooling gives it an edge.

Use Kimi K2.6, DeepSeek-V4-Pro, MiMo-V2.5-Pro, Qwen3.6, GLM-5.1, or Grok 4.3 for cheaper routing, open-weight experimentation, local or sovereign deployment, and high-volume agentic workloads.

The best teams will not pick one model.

They will build routers.
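What a router looks like depends heavily on the team, but the stack above can be sketched as a few rules. Everything here is an illustrative assumption: the `Task` fields, the rule order, and the returned labels are plain display names taken from this article, not API model identifiers. A real router would more likely be driven by evaluation results and cost data than by hand-written rules.

```python
from dataclasses import dataclass

# Cheaper or open-weight options named in the article, treated here as one pool.
TIER2_POOL = (
    "Kimi K2.6", "DeepSeek-V4-Pro", "MiMo-V2.5-Pro",
    "Qwen3.6", "GLM-5.1", "Grok 4.3",
)


@dataclass(frozen=True)
class Task:
    """Hypothetical task descriptor; all fields are assumptions."""
    hard_reasoning: bool = False   # research, math, architecture, synthesis
    long_horizon: bool = False     # long-running agent work
    tone_sensitive: bool = False   # sensitive or collaborative writing
    google_stack: bool = False     # lives inside the Google ecosystem
    high_volume: bool = False      # many cheap, repetitive calls


def route(task: Task) -> str:
    """Return a display label for the model tier a task should go to."""
    # Cheap, repetitive work goes to Tier 2 unless it is also genuinely hard.
    if task.high_volume and not task.hard_reasoning:
        return "Tier 2 pool"
    if task.long_horizon or task.tone_sensitive:
        return "Opus 4.7"
    if task.google_stack:
        return "Gemini 3.1 Pro"
    if task.hard_reasoning:
        return "GPT-5.5"
    return "Tier 2 pool"
```

For example, `route(Task(hard_reasoning=True))` returns `"GPT-5.5"`, while `route(Task(high_volume=True))` falls through to the Tier 2 pool.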

The biggest surprise: China’s labs are not just copying the frontier — they are specializing around deployment

The April Chinese model wave was not random.

GLM-5.1, Qwen3.6, Kimi K2.6, DeepSeek-V4-Pro, and MiMo-V2.5-Pro all push toward the same broad theme: long context, agentic workflows, coding, tool use, and lower-cost deployment.

This is not just a benchmark race. It is a distribution strategy.

OpenAI and Anthropic are selling premium intelligence.

Chinese labs are increasingly selling frontier-adjacent capability that can be deployed more broadly, customized more deeply, and routed more aggressively.

The gap at the very top remains real. GPT-5.5 and Opus 4.7 are still better models overall.

But the gap below the frontier is shrinking fast.

That matters because most economic value from AI will not come from asking the single hardest question. It will come from running useful intelligence everywhere: every repo, every spreadsheet, every customer thread, every document workflow, every internal system, every agent harness.

In that world, the winner is not always the model with the highest IQ.

It is the model with the best intelligence per dollar, per second, per workflow, per failure mode.

What to watch next

The first thing to watch is whether GPT-5.5’s lead holds once more third-party data arrives. OpenAI’s own numbers are impressive, but AI IQ’s methodology is designed to normalize across benchmarks and penalize overreliance on gameable tests. That distinction will matter more as labs optimize for public leaderboards.

The second thing to watch is whether Opus 4.7 becomes the default model for serious agentic coding despite GPT-5.5’s overall IQ lead. If developers and enterprises keep reporting that Opus is easier to trust over long runs, Anthropic may continue to win workflows even when OpenAI wins charts.

The third thing to watch is Gemini. Google was quiet in April, but Gemini 3.1 Pro remains Tier 1. A Gemini 3.2 or Gemini 4 release could quickly reset the frontier.

The fourth thing to watch is open-weight agentic models. Kimi K2.6, DeepSeek-V4-Pro, and MiMo-V2.5-Pro are not just “cheap alternatives.” They are the beginning of a world where frontier-adjacent agents can be run, tuned, routed, hosted, and governed outside the closed-model APIs.

The fifth thing to watch is cost. AI IQ’s effective-cost framing is going to become more important every month. Sticker price is not enough. A model that uses fewer tokens, finishes faster, retries less, and fails less often can be cheaper in practice even when its posted token price is higher.
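One simple way to see why retries and failures matter is to price a successful task rather than an attempt. The sketch below assumes attempts are independent and are retried until one succeeds, so the expected number of attempts is 1 divided by the success rate. The function name and all figures are hypothetical.

```python
def cost_per_success(cost_per_attempt: float, success_rate: float) -> float:
    """Expected dollars spent per successful task under retry-until-success."""
    if not 0.0 < success_rate <= 1.0:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_attempt / success_rate


# Made-up numbers: the pricier model is cheaper per finished task.
cheaper_sticker = cost_per_success(cost_per_attempt=0.10, success_rate=0.5)
higher_sticker = cost_per_success(cost_per_attempt=0.15, success_rate=0.9)
```

With these placeholder inputs, the first model costs about $0.20 per completed task and the second about $0.17, even though its per-attempt price is 50% higher.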

Bottom line

April 2026 gave us a new model hierarchy.

GPT-5.5 is the best overall model.

Opus 4.7 is the best EQ and agentic trust model.

Gemini 3.1 Pro remains a Tier 1 holdover.

Kimi K2.6, DeepSeek-V4-Pro, Muse Spark, Grok 4.3, Qwen3.6, MiMo-V2.5-Pro, and GLM-5.1 make Tier 2 much more competitive than it was a month ago.

The frontier is still a premium market.

But the middle of the market just got much smarter.

That is what professionals should take away from April. Not that one lab won. Not that one leaderboard settled the race. Not that open models caught the frontier.

The real lesson is that model choice is now a portfolio decision.

Use the smartest model when intelligence is the bottleneck.

Use the most emotionally intelligent model when trust and collaboration are the bottleneck.

Use the cheapest good-enough model when scale is the bottleneck.

And revisit the decision every month.

Because April 2026 was not a normal month for AI.

It was a warning shot.