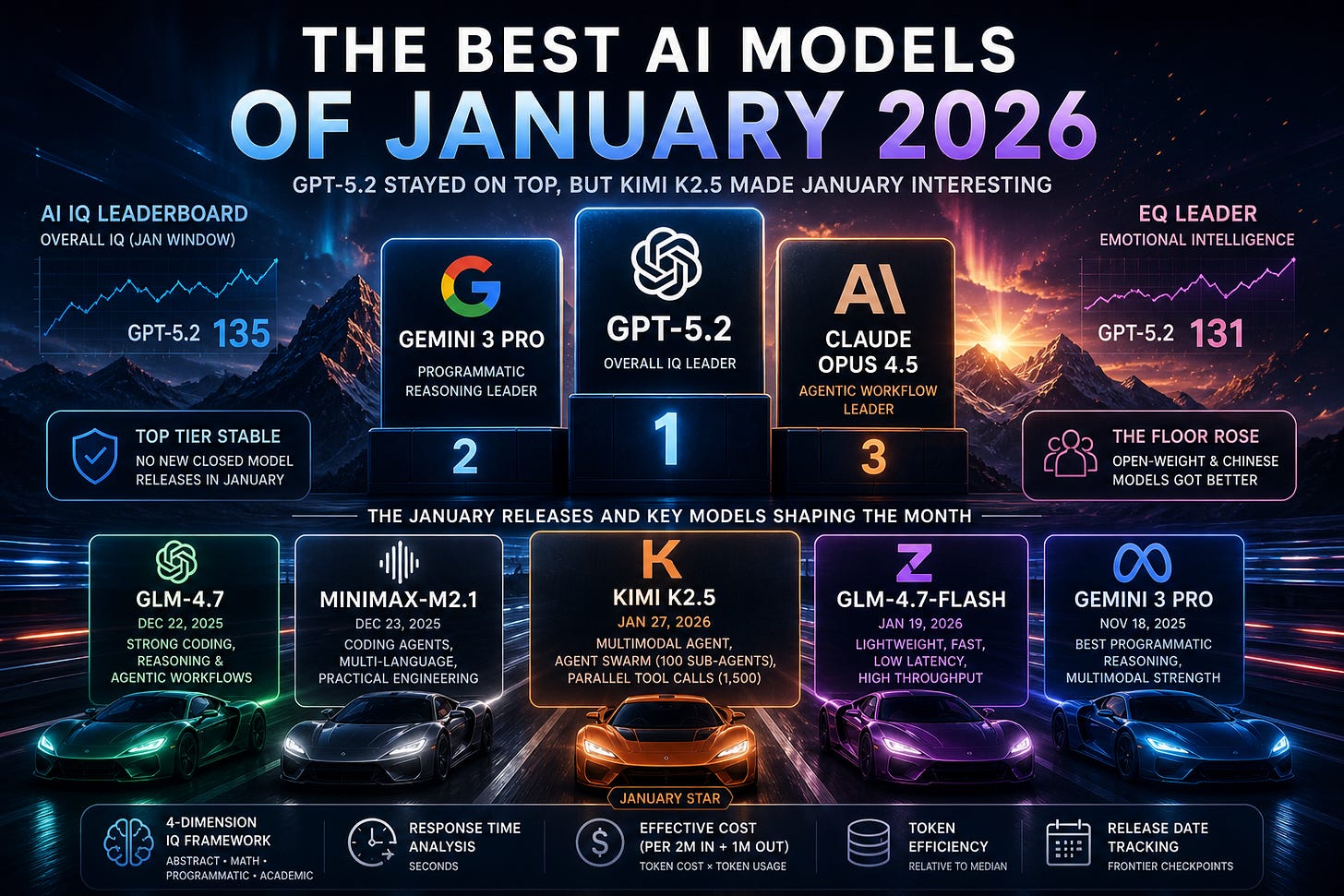

The Best AI Models of January 2026

GPT-5.2 stayed on top, but Kimi K2.5 made January interesting

January 2026 was not a clean frontier-reset month.

OpenAI did not release GPT-5.3. Anthropic did not release Opus 4.6. Google did not release Gemini 3.1. The very top of the leaderboard mostly stayed where December left it: GPT-5.2 and Gemini 3 Pro out in front, with Opus 4.5 still one of the most important models for coding agents and professional work.

That made January easy to underrate.

The month’s real action was one layer down. Kimi K2.5 was the major new model release. Z.ai shipped GLM-4.7-Flash. And several late-December models — especially GLM-4.7 and MiniMax-M2.1 — spent January getting benchmarked, integrated, and compared against the closed frontier.

So January was not about a new best model.

It was about the rest of the stack getting more useful.

January 2026 model releases

The corrected release calendar is important here.

GLM-4.7 and MiniMax-M2.1 were not January releases. GLM-4.7 was released on December 22, 2025, according to Z.ai’s release notes, and MiniMax-M2.1 was released on December 23, 2025, according to MiniMax’s own launch post. They mattered a lot in January, but they should be treated as late-December models that shaped the January rankings, not as January launches.

The true January model-release story was narrower:

January 19: Z.ai released GLM-4.7-Flash

GLM-4.7-Flash was a lighter, lower-latency version of GLM-4.7, designed as the free-tier model. Z.ai positioned it around coding, reasoning, writing, translation, roleplay, high-throughput use cases, and real-time interaction.

January 27: Moonshot released Kimi K2.5

Kimi K2.5 was the month’s most important new model. Moonshot described it as a native multimodal, open-source agentic model trained with continued pretraining over roughly 15T mixed visual and text tokens. It also introduced Agent Swarm, where the model can coordinate up to 100 sub-agents and run parallel workflows across up to 1,500 tool calls.

That is a quieter month than April would later become. But it was still useful. January clarified which models were actually worth testing below the top frontier tier.

The top tier barely moved

The best model available by the end of January was still GPT-5.2.

OpenAI had released GPT-5.2 in December as its most advanced model for professional work and long-running agents, with strong reported results across software engineering, math, abstract reasoning, science, long-context work, spreadsheets, presentations, coding, tool use, and multi-step projects.

Gemini 3 Pro was still right behind it. Google released Gemini 3 in November as its most intelligent model, with emphasis on reasoning, multimodality, coding, and agentic workflows.

Claude Opus 4.5 remained highly relevant, especially for coding agents, tool use, computer use, spreadsheets, and long-running professional work. Anthropic released it in November and described it as its best model for coding, agents, and computer use, with a lower Opus API price of $5 per million input tokens and $25 per million output tokens.

So the January headline is not “new model beats GPT.”

It is more specific: the top stayed mostly closed, but the next layer got much more interesting.

The best January release: Kimi K2.5

Kimi K2.5 was the clear standout January release.

It did not take the overall AI IQ crown from GPT-5.2. It did not make Gemini 3 Pro irrelevant. It did not erase Opus 4.5’s agentic-workflow strengths.

But it did make the open-weight category harder to ignore.

The model’s pitch was unusually product-shaped. Kimi K2.5 was not only a text reasoning model with better benchmark results. It was native multimodal. It could reason over images and video. It could code from visual inputs. It had instant, thinking, agent, and agent-swarm modes. And the Agent Swarm system made a serious attempt at scaling agentic work horizontally instead of just asking one model to think longer.

That last point is the interesting one.

Most agentic AI still runs as one long loop: plan, call tool, observe, revise, call another tool, and repeat. Kimi K2.5’s Agent Swarm design instead asks whether some tasks should be decomposed into many parallel subtasks. Moonshot reports that the system can create up to 100 sub-agents and reduce execution time by up to 4.5x compared with a single-agent setup.

That does not automatically make Kimi K2.5 the best model. It does make it one of the more strategically interesting January releases.

For high-stakes synthesis, GPT-5.2 still made more sense. For top-tier programmatic reasoning, Gemini 3 Pro remained extremely strong. For long-running coding-agent work, Opus 4.5 still had a strong case.

But for teams that care about open weights, multimodal agents, cost control, visual coding, and parallelized workflows, Kimi K2.5 became a model worth testing.

GLM-4.7-Flash: not a frontier reset, but useful

GLM-4.7-Flash was not the most important model of January. Kimi K2.5 was.

But GLM-4.7-Flash still mattered because it pointed at a different part of the market: high-frequency, lower-latency usage.

Z.ai described GLM-4.7-Flash as a lightweight and efficient model designed as the free-tier version of GLM-4.7, with strong performance across coding, reasoning, and generative tasks. The release was explicitly framed around low latency, high throughput, writing, translation, roleplay, and real-time use cases.

That is not glamorous, but it is important.

A lot of real AI usage does not need the best model. It needs a model that is good enough, fast enough, and cheap enough to run constantly. Customer support drafts, internal assistants, low-stakes code edits, document cleanup, translation, lightweight research, routing, summarization, and agent pre-processing do not all need GPT-5.2.

GLM-4.7-Flash was not competing to be the smartest model in the world. It was competing to be useful at scale.

That is a different race, and January had more of that than a simple leaderboard would suggest.

The late-December models that shaped January

The reason January felt busier than its release calendar is that two important late-December releases were still being absorbed by the ecosystem.

GLM-4.7 was released on December 22, 2025. Z.ai described it as a foundation model with improvements in coding, reasoning, agentic capabilities, long-context understanding, and end-to-end task execution across real-world development workflows.

MiniMax-M2.1 was released on December 23, 2025. MiniMax emphasized real-world complex tasks, multi-language programming, office workflows, lower token consumption, faster response speed, and better generalization across coding-agent frameworks like Claude Code, Droid, Cline, Kilo Code, Roo Code, and BlackBox.

These models should not be listed as January launches. But they absolutely belong in a January model analysis, because January was when teams had time to test them against the frontier.

GLM-4.7 was the stronger general open-weight reasoning and coding story.

MiniMax-M2.1 was the practical coding-agent story: less about one heroic score, more about multi-language software work, scaffold compatibility, token efficiency, and whether a model behaves well inside actual coding tools.

That distinction matters. Real coding agents do not live inside one benchmark. They live inside harnesses, repos, terminals, IDEs, issue trackers, and messy project context.

Updated January model rankings

Using the AI IQ January window, the model hierarchy looked roughly like this.

Tier 1 / top frontier

GPT-5.2

The best overall model available by the end of January. Strong across broad reasoning, math, academic work, software engineering, tool use, and professional tasks.

Gemini 3 Pro

Still one of the strongest models overall, and especially strong on programmatic reasoning and multimodal workflows.

High-end professional / frontier-adjacent

Claude Opus 4.5

Not the overall AI IQ leader, but still one of the most important models for coding agents, computer use, long-running tasks, spreadsheets, and professional workflows.

Kimi K2.5

The best true January release. Not top overall, but the most interesting new open-weight agentic model of the month.

GLM-4.7

A late-December release that remained highly relevant in January for open-weight coding, reasoning, and agentic development workflows.

MiniMax-M2.1

Also a late-December release, but important in January for coding-agent workflows, multi-language programming, and practical deployment.

GLM-4.7-Flash

The January speed-and-throughput release. Less important for the top leaderboard, more important for cheap, frequent, lower-latency usage.

That hierarchy is less clean than a single leaderboard, but it is more useful.

January was not about replacing GPT-5.2. It was about giving teams more credible models below it.

Dimension-by-dimension read

AI IQ evaluates models across four cognitive dimensions: Abstract Reasoning, Mathematical Reasoning, Programmatic Reasoning, and Academic Reasoning. The composite IQ is the average of those dimensions, with easier or more gameable benchmarks compressed so they cannot dominate the final score.

That framework is especially helpful for January because the new and recently released models had different shapes.

Best overall IQ: GPT-5.2

GPT-5.2 was still the best overall model in the January window.

Its advantage was breadth. It was not only a coding model, math model, or chat model. It remained the safest default for mixed professional tasks: reading dense material, reasoning through tradeoffs, writing code, checking math, working with long context, and producing polished outputs.

For general high-value work, GPT-5.2 was the default pick.

Best January release overall: Kimi K2.5

Among true January releases, Kimi K2.5 had the strongest overall story.

Its benchmark profile was good, but the more interesting thing was the model shape: multimodal input, coding, visual debugging, agentic execution, office productivity, and parallel sub-agent orchestration.

It was not the best model in the world. But it was the January release most likely to change what serious teams tested.

Best abstract reasoning: GPT-5.2

GPT-5.2 remained the January-window leader on abstract reasoning.

That matters because abstract reasoning is one of the harder capabilities to explain away through benchmark familiarity. AI IQ’s abstract reasoning dimension uses ARC-AGI-2 and ARC-AGI-1, with ARC-AGI-2 treated as the harder, more frontier-discriminating benchmark.

Kimi K2.5 and GLM-4.7 were useful, but they did not close the gap at the very top.

Best mathematical reasoning: GPT-5.2

GPT-5.2 was also the math leader in the January window.

AI IQ’s math dimension uses FrontierMath Tier 4 and AIME, with AIME compressed because it is easier to saturate and more exposed to contamination. That is a better signal than simply asking which model got the highest AIME score.

Kimi K2.5 was strong for an open-weight January release, but GPT-5.2 still had the broader mathematical reasoning profile.

Best programmatic reasoning: Gemini 3 Pro

Gemini 3 Pro had the strongest case on programmatic reasoning among models available by the end of January.

This is one place where “best overall” and “best for a specific task type” diverge. GPT-5.2 led overall, but Gemini 3 Pro remained extremely competitive on coding-heavy and programmatic tasks.

AI IQ’s programmatic dimension is also more useful than a simple SWE-Bench leaderboard because it combines Terminal-Bench 2.0, SWE-Bench Verified, and SciCode, while compressing SWE-Bench due to leakage and gameability concerns.

Among the January-related models, Kimi K2.5 was the most important new programmatic entrant. GLM-4.7 and MiniMax-M2.1 were also worth testing, especially for teams that cared about open-weight deployment and coding-agent workflows.

Best academic reasoning: Gemini 3 Pro, with GPT-5.2 close

Academic reasoning was one of the tighter parts of the January top tier.

Gemini 3 Pro had the strongest case on academic reasoning in the January window, with GPT-5.2 close behind. Both were meaningfully ahead of the true January releases on broad expert-knowledge tasks.

AI IQ’s academic reasoning dimension includes Humanity’s Last Exam, CritPt, and GPQA Diamond, with GPQA compressed because public graduate-level science benchmarks are easier to contaminate than newer expert-screened tests.

Kimi K2.5 was still impressive, especially with tools. But the best academic models were still the late-2025 closed frontier models.

Best EQ: GPT-5.2

In AI IQ’s January-window view, GPT-5.2 had the strongest EQ profile.

That may surprise people who associate Claude with the best conversational feel. But AI IQ’s EQ score is not just vibes. It combines EQ-Bench 3 Elo and Arena Elo, maps them onto an EQ-like scale, and applies a 200-point EQ-Bench penalty to Anthropic models to correct for Claude-judged family bias.

That does not mean GPT-5.2 will feel better than Opus 4.5 in every workflow. It does mean GPT-5.2 looked extremely strong on the measured EQ signals AI IQ tracks.

The cost-performance story

January made the cost-performance conversation more serious.

GPT-5.2 and Gemini 3 Pro were better models overall. But they were not automatically the right models for every call in every workflow.

AI IQ’s effective-cost metric helps here because it does not stop at sticker price. It starts with the cost of a 2M input / 1M output workload, then adjusts by token usage efficiency so token-hungry models are penalized and token-efficient models get credit.

That changes the model-selection problem.

For high-stakes reasoning, GPT-5.2 was worth paying for.

For programmatic reasoning and multimodal coding workflows, Gemini 3 Pro was hard to ignore.

For long-running coding agents and professional workflows, Opus 4.5 remained an important option.

For open-weight agentic work, Kimi K2.5 became the model to test.

For coding-agent experimentation and practical engineering workflows, GLM-4.7 and MiniMax-M2.1 were relevant even though they were December releases.

For lower-latency, high-frequency tasks, GLM-4.7-Flash pointed toward the cheaper end of the routing stack.

The better setup was not one model. It was routing.

The bigger January theme: open-weight models became more practical

The main January pattern was not “China caught OpenAI.”

That would be too strong.

The top was still mostly closed. GPT-5.2 and Gemini 3 Pro were ahead overall, and Opus 4.5 remained one of the most useful professional-agent models.

The better read is that open-weight and frontier-adjacent models became more practical.

That is different from being the best.

A practical infrastructure model needs to be cheap enough, fast enough, available enough, customizable enough, and capable enough. It does not need to win every benchmark. It needs to handle large volumes of useful work without forcing teams to send every intermediate step to the most expensive frontier API.

Kimi K2.5, GLM-4.7, MiniMax-M2.1, and GLM-4.7-Flash all pointed in that direction.

Kimi pushed toward open multimodal agents and parallel sub-agent execution.

GLM pushed toward open-weight coding, reasoning, and faster deployment tiers.

MiniMax pushed toward practical coding-agent generalization across programming languages, tools, and scaffolds.

That was the January story.

The frontier did not move much. The layer underneath it got more usable.

What to watch next

The first thing to watch is whether Kimi K2.5 gets real adoption inside coding-agent and office-agent products. A model can look good in a launch post and still fail to become part of actual workflows. The real signal will be whether developers and teams route meaningful work through it.

The second thing to watch is whether Agent Swarm becomes a serious pattern or stays mostly a demo. Parallel agents are compelling, but they introduce coordination overhead, verification problems, and new failure modes. If the pattern works, it could become one of the more important agent architectures of 2026.

The third thing to watch is GLM-4.7-Flash-style routing. The market needs cheap, fast, good-enough models just as much as it needs frontier reasoning models.

The fourth thing to watch is MiniMax’s practical coding-agent direction. M2.1 was not a January release, but its emphasis on multi-language work, scaffold generalization, and office workflows was exactly where model evaluation needs to go.

The fifth thing to watch is the next true frontier release. January did not bring one. The next OpenAI, Anthropic, or Google release could quickly change the top of the AI IQ ranking.

Bottom line

January 2026 did not give us a new overall champion.

GPT-5.2 remained the best overall model in AI IQ’s January-window ranking.

Gemini 3 Pro remained one of the strongest models overall and had the best case on programmatic reasoning.

Opus 4.5 stayed important for coding agents, computer use, and long-running professional work.

Kimi K2.5 was the best true January release.

GLM-4.7-Flash was the month’s practical speed-and-throughput release.

GLM-4.7 and MiniMax-M2.1 were late-December releases, not January releases, but both shaped the January conversation around open-weight coding and agentic workflows.

The practical takeaway is simple: January made routing more important.

For the hardest work, pay for the frontier. For coding-heavy, latency-sensitive, open-weight, or high-volume agent workflows, the January and late-December models deserved real evaluation.

The best model did not change.

The set of models worth using did.